AI Workstation

70B models at Q8. No compromises on VRAM or speed.

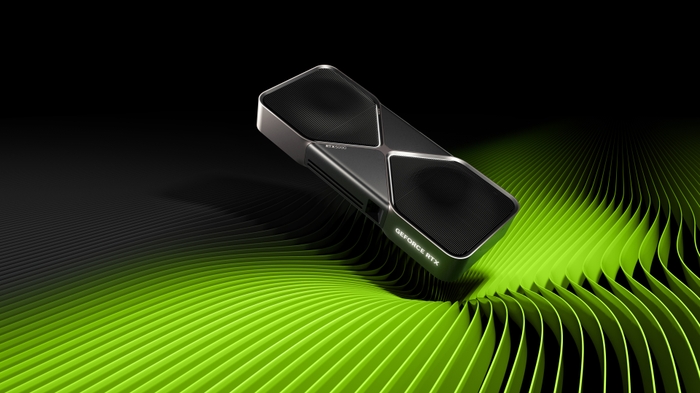

The AI Workstation is the most capable consumer-grade AI machine you can build in 2026. The RTX 5090's 32GB of GDDR7 with 1,792 GB/s of bandwidth is a generational leap: it holds larger models and longer contexts entirely in VRAM than the previous generation's 24GB cards could, and it runs 70B models at 8-bit quantization (about 70GB of weights) with the layers that don't fit offloaded to system RAM. Paired with 96GB of system RAM, a 16-core CPU, and Gen5 storage, this build handles everything from production inference servers to training LoRA adapters to generating hundreds of images per hour. If you're asking 'What's the best AI PC money can buy?', this is it.

Why This Build

- 32GB GDDR7 finally breaks the 24GB consumer barrier: 70B models at Q8 (about 70GB of weights) run with the layers that don't fit in VRAM offloaded to system RAM

- 1,792 GB/s memory bandwidth delivers more inference tokens per second than any previous consumer GPU

- 5th-gen Tensor Cores with native FP8 support dramatically speed up inference

- 96GB system RAM, enough for massive datasets, multiple model instances, and full-stack AI development

- 16 cores handle data preprocessing, model serving, and development tools simultaneously

Parts & Why We Chose Them

The RTX 5090 is the most powerful consumer GPU ever made. 32GB GDDR7 at 1,792 GB/s — nothing else comes close for local AI inference. The street price premium over MSRP reflects real AI demand.

The Ryzen 9 9950X brings 16 cores and 32 threads — essential for data preprocessing, running inference servers, and development tools side by side. The 170W TDP is manageable with a good cooler.

96GB (2x48GB) DDR5 is 3x the GPU's VRAM. This is critical — when running multiple models or processing large datasets alongside inference, system RAM is your overflow. Don't go lower than 64GB with a 32GB GPU.

The Gen5 NVMe drive reads at 12,400 MB/s. Loading a 70B Q8 model (~70GB) from disk takes about 6 seconds; on a SATA SSD at roughly 550 MB/s it would take about two minutes. For AI workstations, storage speed directly impacts workflow.
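The load-time figures above come from dividing model size by sequential read speed. A rough sketch of that arithmetic, assuming the drive sustains its rated throughput for the whole read and taking 550 MB/s as a typical SATA SSD figure (an assumption, not a part from this build):

```python
def load_seconds(model_gb: float, read_mb_per_s: float) -> float:
    """Best-case seconds to read a model file at a sustained sequential rate."""
    return model_gb * 1000 / read_mb_per_s

MODEL_GB = 70        # 70B parameters at 8 bits is roughly 70 GB
GEN5_MB_S = 12_400   # rated speed of the Gen5 NVMe in this build
SATA_MB_S = 550      # assumed typical SATA SSD speed

gen5 = load_seconds(MODEL_GB, GEN5_MB_S)
sata = load_seconds(MODEL_GB, SATA_MB_S)
print(f"Gen5 NVMe: {gen5:.1f} s")
print(f"SATA SSD:  {sata:.1f} s")
```

Real loads will usually be somewhat slower than this, since filesystem overhead and the copy into GPU memory are not counted here.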

Fractal Design Meshify 2 — spacious mid-tower with 467mm GPU clearance (the 5090's 340mm fits easily), excellent airflow, and 6 x 3.5" drive bays for bulk storage expansion.

Corsair iCUE H150i Elite 360mm AIO — premium cooling for the 9950X's 170W TDP during sustained AI workloads. The 360mm radiator fits the Meshify 2's top mount.

1200W provides the headroom a 575W GPU demands. The 5090 can spike to 700W+ during transient loads. For 24/7 AI inference, PSU reliability matters — Corsair RM series is proven.
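A quick power budget shows where that headroom goes. This is a minimal sketch using the figures quoted in this guide; the 100W allowance for the motherboard, RAM, SSD, fans, and pump is an assumed placeholder, and CPU package power can exceed its TDP rating under load.

```python
GPU_TDP_W = 575     # RTX 5090 rated power
GPU_SPIKE_W = 700   # transient spike figure quoted above
CPU_TDP_W = 170     # Ryzen 9 9950X TDP
OTHER_W = 100       # assumed: motherboard, RAM, SSD, fans, pump
PSU_W = 1200

def headroom(psu_w: int, *loads_w: int) -> int:
    """Watts left over after subtracting the given loads from PSU capacity."""
    return psu_w - sum(loads_w)

sustained = headroom(PSU_W, GPU_TDP_W, CPU_TDP_W, OTHER_W)
transient = headroom(PSU_W, GPU_SPIKE_W, CPU_TDP_W, OTHER_W)
print(f"Sustained headroom: {sustained} W")
print(f"Transient headroom: {transient} W")
```

Under these assumptions the supply keeps a comfortable margin even during GPU spikes, which is the point of sizing up to 1200W.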

What You Can Run

Fast local chatbot for everyday questions, summarization, and simple coding tasks

Strong all-rounder — great for coding assistance, writing, and data analysis without needing a 70B model

Capable mid-size model — good balance of speed and intelligence for chat, code, and general tasks

Lightweight and fast — perfect for quick queries, text processing pipelines, and always-on local assistant

State-of-the-art image generation — photorealistic images, artistic styles, detailed compositions

Workhorse image generation — fast, well-supported, huge community of fine-tuned models and LoRAs

Latest Stable Diffusion architecture — better text rendering and composition than SDXL

High-quality text-to-video — generate 5-10 second video clips from text prompts, one of the best open-source video generators

Accessible video generation — create 6-second clips at 720p, good starting point for local video gen on mid-range GPUs

Smooth text-to-video — known for natural motion and good temporal consistency in generated clips

Lightweight video generation — the fastest and most accessible model, generates 5-second clips on 8GB+ GPUs

Image-to-video animation — takes a still image and generates a short animated video from it

High-quality text-to-video — competitive with commercial video generators, strong prompt following

Dedicated code completion and generation — supports 80+ programming languages

Best open-source coding model — handles complex refactoring, debugging, and full-file generation

Vision + language — analyze images, extract text from screenshots, describe charts and diagrams

Predict protein structures from amino acid sequences — the breakthrough that won the Nobel Prize, now runnable on your own hardware

Fast protein structure prediction from single sequences — no MSA needed, predictions in seconds instead of minutes

Protein language model for embeddings, function prediction, and variant effect analysis — the workhorse of computational biology

Single-cell RNA-seq foundation model — cell type annotation, perturbation prediction, and multi-batch integration without traditional pipelines

Design novel proteins through diffusion — generate binders, scaffold functional motifs, and create entirely new protein structures

Fine-tune your own custom 8B LLM — train on your data for domain-specific chat, coding, or analysis

Train custom SDXL LoRAs — add your own styles, characters, and concepts to image generation

Train FLUX LoRAs for state-of-the-art custom image generation — significantly better than SDXL

Tight Fit (May Need CPU Offload)

Run a frontier-class chatbot locally — comparable to ChatGPT for general knowledge, reasoning, and writing

Top-tier reasoning and math — handles complex analysis, long documents, and multi-step problem solving

Advanced reasoning model — excels at math, logic puzzles, and step-by-step problem solving

Fine-tune a frontier 70B model — QLoRA makes this possible on high-end consumer hardware
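Why 70B-class models land in this tier can be seen from a back-of-the-envelope estimate of weight size against the 5090's 32GB. A minimal sketch: the 4GB reserved for KV cache and runtime overhead is an assumed placeholder, and real quantization formats store slightly more than their nominal bits per weight.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB: parameter count times bytes per parameter."""
    return params_billion * bits_per_weight / 8

def split(params_billion, bits_per_weight, vram_gb=32, reserved_gb=4):
    """Return (GB of weights kept in VRAM, GB offloaded to system RAM)."""
    total = weights_gb(params_billion, bits_per_weight)
    usable = vram_gb - reserved_gb   # reserved_gb is an assumed allowance
    on_gpu = min(total, usable)
    return on_gpu, total - on_gpu

for label, bits in [("FP16", 16), ("Q8", 8), ("Q4", 4)]:
    gpu, cpu = split(70, bits)
    print(f"70B {label}: {gpu:.0f} GB in VRAM, {cpu:.0f} GB in system RAM")
```

The more of the model that sits in system RAM, the more generation speed is bounded by system memory bandwidth rather than the GPU's, so lower-bit quantizations are the faster option for these models.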

Trade-offs

- The RTX 5090 street price is ~$2,800, well above the $1,999 MSRP

- 575W TDP means serious power draw: expect $30-50/month in electricity for 24/7 inference

- The card is 340mm and triple-slot, so it dominates the case interior

- Diminishing returns vs the Prosumer build for users who mostly run 8B–32B models

- Still can't run 70B at FP16 (needs 140GB VRAM), so quantization is still required for the largest models
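The electricity figure depends heavily on your local rate and on how close to full power the GPU actually runs. A rough sketch, assuming the GPU alone draws its full 575W around the clock; the rates below are illustrative placeholders, not quotes from any utility.

```python
def monthly_cost(watts: float, usd_per_kwh: float,
                 hours_per_day: float = 24, days: int = 30) -> float:
    """Electricity cost in USD for a constant load over one month."""
    kwh = watts / 1000 * hours_per_day * days
    return kwh * usd_per_kwh

for rate in (0.08, 0.12, 0.16):   # assumed example rates in USD per kWh
    print(f"${rate:.2f}/kWh -> ${monthly_cost(575, rate):.2f}/month")
```

Plug in your own rate and a realistic average draw; an inference server that idles between requests will come in well under the full-load number.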

Ideal For

- Running 70B-class models at Q8 quality with partial CPU offload (Llama 3.1 70B, Qwen 72B, DeepSeek R1 70B distill)

- Production inference serving

- Fine-tuning up to 32B parameter models

- Running multiple models simultaneously

- FLUX image generation at full FP16 quality

- Agentic AI workflows with local models

- AI startup prototyping before scaling to cloud

Not Ideal For

- Budget-conscious builders (the Prosumer build does 80% of this at 60% of the cost)

- Pure gaming (the 5090 is overkill; a 5070 Ti is more cost-effective for gaming only)

- Training models from scratch at scale (that's still cloud/data center territory)

Detailed Compatibility

See full VRAM analysis, setup instructions, and performance estimates for each model.